Listen to the companion Sparks + Embers episode for this Kindling feature article below.

This article is part of our Goodpain Guide to Authentic Human Learning series, which belongs to our content on Contemplation & Reflection, one of our Goodpain Pillars.

Our next article will be available the week of 21 July 2025.

AI Decision Fatigue is what happens when we start surrendering our judgment to algorithms without realizing it, then find ourselves exhausted by having to constantly choose between what the AI suggests and what we actually think. Discover four stages of intellectual surrender and the practices that preserve cognitive vitality while collaborating with artificial intelligence.

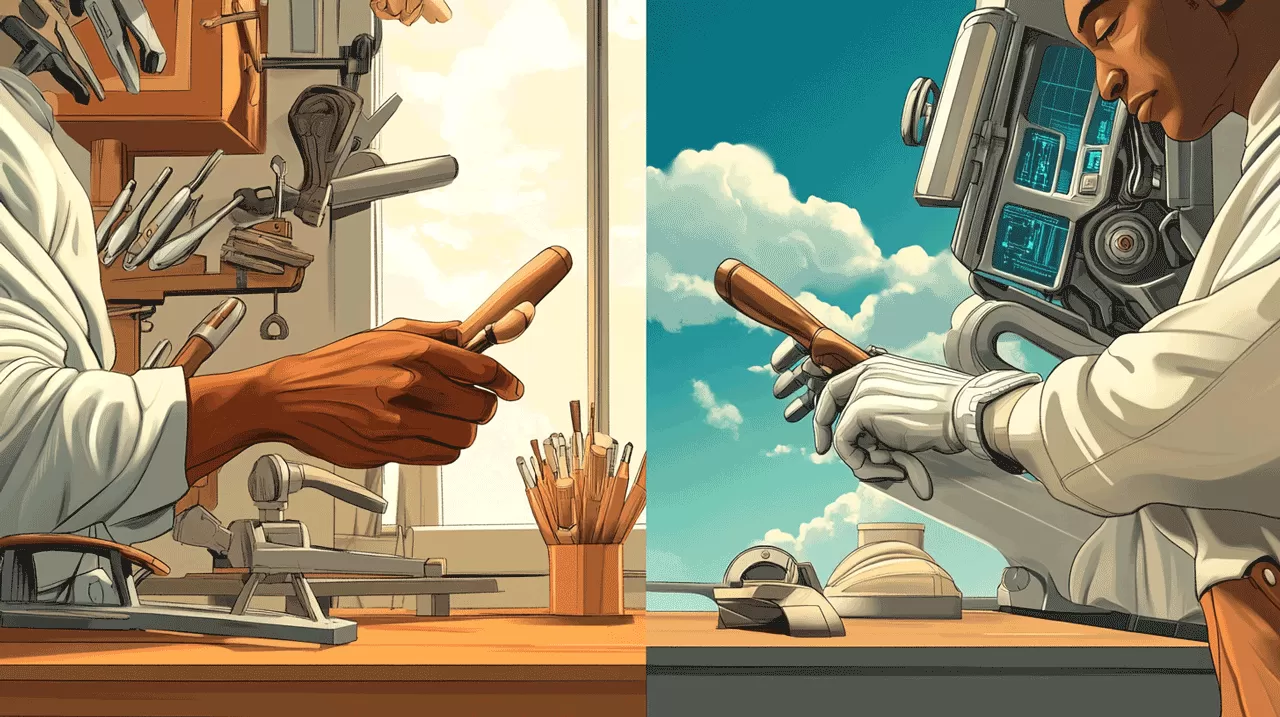

The Cabinet Project: When Smart Tools Enable Cognitive Surrender

I was building a small cabinet to replace a store-bought unit we used for managing my daughter’s medications – a station for organizing her prescriptions and storing nursing documentation. The project demanded precision; her care depended on systematic organization.

I had followed a disciplined process. Sketching first, then small-scale models, full-sized drafting plans, lumber requirements, cut lists. Every step deliberate, thorough – a formula I could trust. The process promised success through methodical execution.

All that planning culminated in the first cut. Then the second, followed by continuous checks between human judgment and machine precision. At no point were the plans alone sufficient to build the cabinet.

I was surrounded by other apprentices who swung between two extremes. On one side, trial-and-error improvisers jumped from rough sketches straight to milling lumber. I watched one student make multiple trips to the mill, create avoidable waste, encounter frustrations he hadn’t anticipated.

On the other side, perfection-seekers believed they could anticipate every variable to the most minute detail. They were shocked when they followed their plans precisely or trusted machines for zero-tolerance results, and problems still emerged. They had outsourced their thinking to a myth of perfection.

Throughout the project, I found myself swinging between these extremes. Each time I struggled at either end, I faced the same realization: I was surrendering my autonomy to a myth. The perfectionist myth promises pain-free existence with smooth sailing. The improviser myth suggests everything will fall into place without risking time, effort, and focused attention.

Both myths seduce us into abandoning what Dietrich Bonhoeffer called “inner independence” – our capacity to maintain an autonomous position when facing external pressures. As he observed, “Under the overwhelming impact of rising power, humans are deprived of their inner independence and give up establishing an autonomous position.”

The cabinet project taught me something profound about our current moment with artificial intelligence. We face the same fundamental choice: maintain cognitive authority while leveraging sophisticated tools, or surrender our judgment to systems that promise perfect solutions.

Unlike a digital caliper that simply measures, AI appears to understand, reason, and persuade. This creates unprecedented opportunities for intellectual surrender – not because we lack intelligence, but because intelligence alone doesn’t protect us from the deeper pattern Bonhoeffer identified.

The question isn’t whether we’re smart enough to avoid this trap. The question is whether we can maintain our role as Editor-in-Chief of our own minds.

How We Lose What Makes Us Human: The Four Stages of Surrender to AI Decision Fatigue

The surrender of independent thought follows predictable patterns. Understanding these stages helps us recognize intellectual surrender before it becomes complete. Each stage represents a deeper level of cognitive delegation, moving from editorial control to agency itself.

Stage 1: Editorial Surrender – When We Stop Questioning

The first stage begins when we accept the first plausible response rather than iterating toward insight. When I query an AI system, editorial surrender looks like treating the first coherent output as sufficient. We become consumers of information rather than editors of it.

The perfectionist approach to my cabinet project exemplified this pattern – accepting the plans as absolute truth rather than treating them as working hypotheses. Each measurement became disconnected from the actual flow of construction, creating a false sense of control over an inherently dynamic process.

What we surrender here is editorial control. We stop asking whether something is not just accurate but wise, not just efficient but appropriate. We delegate the crucial human function of judgment.

Stage 2: Reality Testing Surrender – Trusting Algorithms Over Experience

Jean Baudrillard warned decades ago that “the map precedes the territory” – that our models of reality would become more compelling than reality itself. In my workshop, this played out when the perfect plans for my cabinet became more trustworthy than the messy reality of wood and tools. The drawings promised a level of precision that the actual materials couldn’t deliver, yet I found myself trusting the simulation over the feedback from my hands.

In the age of AI, we see this when algorithmic recommendations feel more trustworthy than our own experience. We begin to trust what sounds coherent over what we can observe and verify ourselves. I watch this happen in workshops when students rely more on YouTube tutorials than on the feedback they’re getting from the wood itself. The mediated experience becomes more “real” than the immediate one.

The authority we lose is our capacity to distinguish between what sounds right and what is right. We begin living in a simulation that seems more coherent than the messy, contradictory experience of actual engagement.

Stage 3: Intellectual Courage Surrender – Using AI to Confirm Biases

Bonhoeffer observed that “intelligence becomes irrelevant when individuals surrender judgment to group consensus.” This stage involves using AI to validate existing beliefs rather than challenge them. I see this when people prompt AI systems to generate arguments supporting positions they already hold, rather than stress-testing their assumptions. The technology becomes a sophisticated echo chamber.

Social media algorithms have trained us for this surrender. We’re accustomed to having our preferences anticipated and our biases confirmed. When AI can provide more sophisticated versions of this confirmation, intellectual courage atrophies.

What we lose is our willingness to be wrong, to change our minds, to hold uncomfortable tensions. We become approval-seekers rather than truth-seekers.

Stage 4: Decision-Making Surrender – From Agency to Automation

Carlo Cipolla’s laws of human stupidity provide the framework for understanding this final stage. Cipolla defines stupidity not as lack of intelligence but as “causing losses to others while deriving no gain and even possibly incurring losses.”

When we treat AI as an oracle rather than a thinking partner, we often create exactly this dynamic. We cause intellectual harm – to ourselves and others – while believing we’re helping. This happens when we delegate decisions that require human judgment to systems that excel at pattern recognition but lack wisdom. We create what looks like efficiency but represents institutionalized stupidity.

The authority we surrender is agency itself. We become order-takers rather than directors of our own cognitive development.

The Editor-in-Chief Principle: Maintaining Cognitive Authority

Understanding how we surrender cognitive authority naturally leads to the question of how we maintain it. The answer lies in what I call the Editor-in-Chief principle – a way of engaging with powerful tools that preserves human agency while benefiting from technological capability.

Master craftspeople don’t just use better tools; they maintain creative authority over their work. The tool serves the vision, not the reverse. When I watch a master woodworker, I see someone in constant dialogue with both their tools and their materials. They’re making hundreds of micro-decisions that no tool could make for them.

Here’s the paradox we face with artificial intelligence: to use it effectively, we must remain the authority in the relationship. But AI’s sophistication constantly tempts us to surrender that authority. The stakes are higher than they might initially appear. As Bonhoeffer observed, “The power of the one needs the stupidity of the other.” If we surrender our cognitive authority to systems we don’t understand, we risk becoming what he called “mindless tools.”

I’ve learned to distinguish between two fundamentally different ways of engaging with AI. Transactional AI use treats the system as an oracle – “Give me the answer” – and surrenders editorial control by accepting whatever it produces. Editorial AI use treats the system as a sophisticated thinking partner – “Help me think through this” – while retaining final judgment.

The difference isn’t in the technology; it’s in our approach. When I use AI to help write, I can either ask it to write something for me (transactional) or ask it to help me think through what I want to say (editorial). In the first case, I’m outsourcing the thinking. In the second, I’m augmenting it.

This distinction becomes crucial when we consider embodied knowledge – understanding that lives in the full sensory-motor engagement with reality. There’s a scene in my workshop that repeats weekly. A student will run their hand along a board and immediately know something’s wrong – the grain feels off, there’s tension in the wood, something isn’t quite right. They can’t articulate what they’re sensing, but their hands are reading information their eyes missed.

This embodied knowledge can’t be digitized or downloaded. It develops only through sustained, direct engagement. When we rely on external systems to navigate, our spatial reasoning weakens. When we outsource memory to devices, our internal memory systems atrophy. The problem arises when the delegation happens unconsciously, and we lose capacities we didn’t realize we were trading away.

I think of it as the difference between “knowing about” and “knowing through.” AI can help us know about things with unprecedented depth and breadth. But it can’t help us know through them – that requires what I call “contemplative lingering.”

Reading the Signs: Recognizing Intellectual Surrender

The challenge of maintaining cognitive authority requires the ability to recognize when we’re surrendering it. How do we know when we’re appropriately delegating routine tasks versus surrendering intellectual authority? The distinction becomes clear through what I call “authority check questions” and observable warning signs.

The most telling indicator is automation bias – overriding correct personal decisions based on algorithmic suggestions. A related sign is digital dependency, where we experience a noticeable decline in performance when external systems aren’t available. We might find ourselves using AI to validate existing beliefs rather than test them, or treating AI outputs as authoritative rather than provisional.

The workshop provides a useful diagnostic: Can the apprentice work effectively when the master isn’t present? Applied to cognitive work, this becomes: Can I think well when my usual AI tools aren’t available? Am I directing this interaction or being directed by it? Would I be able to evaluate this without AI assistance? Am I enhancing my judgment or replacing it?

Master craftspeople can distinguish between apprentices who are developing skill and those who are becoming dependent on external support. True competence maintains agency even when leveraging external tools. The difference between using tools and being used by tools becomes apparent under pressure. This recognition prepares us for the active work of preserving cognitive vitality.

The Four Preservation Practices: Rebuilding Cognitive Vitality

Recognizing intellectual surrender naturally leads to the question of how to counter it. The four preservation practices work together to maintain cognitive authority while benefiting from AI collaboration. Each practice addresses a specific aspect of the surrender dynamic while building overall intellectual resilience.

Practice No. 1: Editorial Discernment – Sophisticated Evaluation

The first preservation practice involves sophisticated evaluation of information sources and AI outputs – not cynical distrust, but what I call “source skepticism.” In building the cabinet, I learned to treat my detailed drawings as hypotheses rather than absolute truth. The measurements guided my cuts, but the actual wood grain and the fit of joints provided the final verdict. Each piece taught me something the plans couldn’t predict.

Applied to AI interaction, this means learning to direct AI queries rather than accept first responses. When I work with AI systems, I’ve learned to treat initial outputs as rough drafts requiring editorial review. This goes deeper than fact-checking. It involves what the craftsperson develops through experience – the ability to sense when something “feels right” even if we can’t immediately articulate why.

Well-designed AI interactions can enhance our discernment by making our reasoning processes explicit, but only if we maintain editorial authority throughout the process. This practice requires intellectual honesty – the willingness to distinguish carefully between what we’ve verified and what we’re assuming, even when uncertainty makes our conclusions less compelling.

Practice No. 2: Cognitive Authority – Strategic Engagement with Difficulty

The second practice involves strategic engagement with difficulty. Not all cognitive load is created equal – some builds capacity, some depletes it. The cabinet project required constant shifts between detailed planning and real-time adaptation. I learned to reserve mental energy for the decisions that required human judgment while delegating routine measurements to tools.

Productive struggle occurs at the edge of our current capability – challenging enough to promote growth but not so overwhelming as to shut down learning. Destructive struggle occurs when we’re missing foundational knowledge or dealing with poorly designed tasks.

Applied to AI collaboration, this means maintaining enough cognitive capacity to edit and improve AI output rather than simply accepting it. This requires understanding our own cognitive rhythms and managing AI interaction accordingly. Some challenges are worth embracing because they build transferable skills. Others should be delegated or eliminated. The craftsperson’s judgment involves knowing the difference.

This practice requires intellectual tenacity – the persistence to work through hard problems systematically while remaining open to changing our approach when evidence demands it.

Practice No. 3: Independent Verification – Building Internal Standards

Every cut in the cabinet project required verification from two sources – the precision of my measurements and the response of the wood itself. Neither alone was sufficient; both together created reliable feedback loops. This practice involves developing internal standards of excellence that don’t depend on external confirmation.

The most sophisticated application involves using AI tools to expose our own blind spots and biases, rather than seeking confirmation of our existing beliefs. Can we evaluate AI output against reality and other sources rather than simply trusting its internal coherence? Internal standards of excellence emerge through sustained practice and attention to what works and what doesn’t, independent of external metrics or social approval.

This practice requires intellectual humility – recognizing the boundaries of our knowledge while maintaining confidence in what we do know well, and being clear about the difference.

Practice No. 4: Productive Discomfort – Embracing Uncertainty

The fourth practice involves the willingness to sit with uncertainty, contradiction, and complexity without rushing toward premature closure. The cabinet project forced me to make decisions without complete information. The wood grain wouldn’t reveal all its secrets until I started cutting. The joinery couldn’t be tested until assembly. Learning to work skillfully with uncertainty became as important as technical precision.

When apprentices want to use power tools for every task, I often insist they complete projects with hand tools first. The additional difficulty develops sensitivities and skills that transfer to all subsequent work. Applied to AI collaboration, this might mean working through problems manually before seeking AI assistance, or deliberately engaging with perspectives that challenge our assumptions.

AI systems excel at providing coherent-sounding answers to complex questions. Intellectual resilience involves maintaining comfort with partial knowledge and provisional conclusions. This practice requires intellectual courage – the willingness to follow evidence and reasoning even when it threatens our comfort, status, or relationships.

The Character Foundation: A Preview of What’s to Come

The preservation practices I’ve outlined depend on something deeper than technique – they require character. Just as sharp chisels demand respectful attention from the craftsperson, powerful AI tools demand specific virtues from their users. Each stage of intellectual surrender represents a character failure, and each can be countered by cultivating what I call the thinking virtues.

When I watch apprentices working in groups, I can predict who will maintain their standards under social pressure and who will conform to group expectations. The difference isn’t confidence – it’s intellectual courage. This virtue directly counters intellectual courage surrender, where we use AI to validate existing beliefs rather than challenge them. In our current moment, when sophisticated systems can provide convincing justifications for whatever we want to believe, true courage means directing these tools toward truth-seeking rather than comfort-seeking.

Similarly, I can spot students who’ve developed what I call “tool humility” – they know the capabilities and limitations of their equipment. Applied to thinking, this becomes intellectual humility: recognizing the boundaries of our knowledge while maintaining confidence in what we do know well. This counters the reality testing surrender where algorithmic recommendations feel more trustworthy than our own experience.

The cabinet project taught me the difference between stubbornness and intellectual tenacity. Tenacity means persistently working through problems systematically, trying different approaches while maintaining commitment to quality outcomes. This counters decision-making surrender, where we delegate decisions that require human judgment to systems that excel at pattern recognition but lack wisdom.

Finally, the wood doesn’t lie. If your joint is loose, you feel it immediately. This immediate feedback develops intellectual honesty – you can’t maintain false beliefs about your work because reality corrects them quickly. Digital environments often lack this immediate feedback, making intellectual honesty more important and more difficult. This virtue counters editorial surrender, where we accept the first plausible response rather than iterating toward insight.

I’ve noticed that craft develops character alongside skill. The discipline required to work carefully with sharp tools parallels the discipline required to think carefully with powerful ideas. Both require respectful attention – the recognition that we’re working with forces that can create or destroy.

These thinking virtues aren’t just abstract ideals – they’re practical capacities that improve all our cognitive work, including our collaboration with AI systems. They develop through daily practices: taking time to understand rather than rushing to judgment, seeking out disconfirming evidence, admitting when we don’t know something, persisting through difficult problems.

In our next article, we’ll explore these thinking virtues in greater detail, examining how they develop through practice and how they protect us from the sophisticated forms of bias that emerge when intelligent systems amplify our existing blind spots. We’ll discover that the real challenge isn’t just avoiding individual cognitive errors, but building the character infrastructure that allows us to think well together in communities where both human and artificial intelligence shape our understanding.

The Daily Choice: What We Risk Losing

We stand at a threshold that demands conscious choice. AI can enhance human thinking or replace it. The determining factor isn’t the technology itself – it’s whether we maintain our role as Editor-in-Chief of our own minds.

The cabinet project revealed the essential dynamic: sophisticated tools can amplify our capabilities or substitute for them, depending on how we engage. The apprentices who flourished learned to maintain creative authority while leveraging external precision. Those who struggled surrendered their judgment to either perfectionist planning or improvised intuition.

Bonhoeffer’s insight remains relevant: “The power of the one needs the stupidity of the other.” If we surrender our cognitive authority to systems we don’t understand, we risk becoming what he called “mindless tools” – able to follow sophisticated instructions but unable to evaluate whether those instructions serve human flourishing.

Every AI interaction presents the same choice the apprentice faces with power tools: will we direct the technology toward our vision, or will we let the technology direct us? The path forward isn’t to avoid these powerful tools – that would be both impractical and unnecessary. The path is to maintain cognitive ownership while benefiting from technological augmentation.

This requires the willingness to slow down enough to understand what we’re doing and why. It requires the capacity to maintain our own standards even when external systems offer apparently better alternatives. Most importantly, it requires the daily practice of thinking well – not just efficiently, but wisely.

The choice is ours to make, and we make it new each day. The question isn’t whether we’re smart enough to navigate this challenge – intelligence alone won’t save us. The question is whether we’re wise enough to preserve what makes us human while embracing what makes us more capable.

In the workshop of ideas, mastery means knowing when to trust the tool and when to trust yourself. That discernment – developed through practice, tested through difficulty, refined through community – remains irreplaceably human. Our humanity isn’t threatened by AI’s capabilities. It’s threatened by our willingness to abdicate our own.

"I will use AI as a sophisticated tool while maintaining creative authority over my thinking. I will seek AI's assistance without surrendering my agency. I will collaborate with artificial intelligence while preserving my human intelligence."

The Editor-in-Chief Pledge

Research References


Evaluate Your Sources – RDG 100 – Critical Thinking Analysis – SCC Research Guides at Spartanburg Community College. (Last updated Jan 23, 2025). Spartanburg Community College Library. Retrieved from https://libguides.sccsc.edu/rdg100-criticalthinking.

Ali, Z., & Janarthanan, J. (2024, September 23). Understanding Digital Dementia and Cognitive Impact in the Current Era of the Internet: A Review. Cureus, 16(9), e70029. doi: 10.7759/cureus.70029. PMCID: PMC11499077. PMID: 39449887. Retrieved from https://www.ncbi.nlm.nih.gov/pmc/articles/PMC11499077/.

- Cited within source: Irazoki, E., Contreras-Somoza, L. M., Toribio-Guzmán, J. M., Jenaro-Río, C., van der Roest, H., & Franco-Martín, M. A. (2020). Technologies for cognitive training and cognitive rehabilitation for people with mild cognitive impairment and dementia. A systematic review. Frontiers in Psychology, 11, 648. doi: 10.3389/fpsyg.2020.00648.

- Cited within source: Manwell, L. A., Tadros, M., Ciccarelli, T. M., & Eikelboom, R. (2022). Digital dementia in the internet generation: excessive screen time during brain development will increase the risk of Alzheimer’s disease and related dementias in adulthood. Journal of Integrative Neuroscience, 21(1), 28. doi: 10.31083/j.jin2101028.

- Cited within source: Odgers, C. L., & Jensen, M. R. (2020). Annual research review: Adolescent mental health in the digital age: facts, fears, and future directions. Journal of Child Psychology and Psychiatry, 61(3), 336–348. doi: 10.1111/jcpp.13190.

- Cited within source: Preiss, M. (2014). Manfred Spitzer: digital dementia: what we and our children are doing to our minds. Cognitive Remediation Journal, 2014, 31–34. Retrieved from https://cognitive-remediation-journal.com/pdfs/crj/2014/02/04.pdf.

- Cited within source: Sparrow, B., Liu, J., & Wegner, D. M. (2011). Google effects on memory: cognitive consequences of having information at our fingertips. Science, 333(6040), 776–778. doi: 10.1126/science.1207745.

- Cited within source: The Think Pot. (2024). What is Digital Dementia and How to Prevent it? Experts Talk. Retrieved from https://thethinkpot.in/what-is-digital-dementia-and-how-to-prevent-it-experts-talk/.

Amusing Ourselves to Death: Public Discourse in the Age of Show Business. (2006). Neil Postman. New York: Penguin Books. (Original work published 1985). Retrieved from https://ia600101.us.archive.org/27/items/Various_PDFs/NeilPostman-AmusingOurselvesToDeath.pdf

Automation bias. (n.d.). In Wikipedia, the free encyclopedia. Retrieved from https://en.wikipedia.org/w/index.php?title=Automation_bias&oldid=1296428872.

Automation bias. (2020). C. Gratton (K. McClintock, Trans.). In E. Gagnon-St-Pierre, C. Gratton & E. Muszynski (Eds.), Shortcuts: A handy guide to cognitive biases Vol. 2. Retrieved from https://www.shortcogs.com.

- Cited within source: Dzindolet, M. T., Peterson, S. A., Pomranky, R. A., Pierce, L. G., & Hall, B. P. (2003). The role of trust in automation reliance. International Journal of Human-Computer Studies, 58(6), 697-718.

- Cited within source: Goddard, K., Roudsari, A., & Wyatt, J. C. (2012). Automation bias: a systematic review of frequency, effect mediators, and mitigators. Journal of the American Medical Informatics Association, 19(1), 121-127.

Beyond Asch: Contemporary Research on Conformity and Independence. (n.d.). Psychology Town.

ClinicalTrials.gov Glossary. (n.d.). ClinicalTrials.gov.

Cognitive (Expectancy) Theory Of Addiction And Recovery Implications. (n.d.). MentalHealth.com.

Copersino, M. L. (2017, February). Cognitive Mechanisms and Therapeutic Targets of Addiction. Current Opinion in Behavioral Sciences, 13, 91–98. doi: 10.1016/j.cobeha.2016.11.005. PMCID: PMC5461927. PMID: 28603756. Retrieved from https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5461927/.

- Cited within source: Foxall, G. R. (2016). Metacognitive Control of Categorial Neurobehavioral Decision Systems. Frontiers in Psychology, 7, 170. doi: 10.3389/fpsyg.2016.00170.

- Cited within source: Kerst, W. F., & Waters, A. J. (2014). Attentional retraining administered in the field reduces smokers’ attentional bias and craving. Health Psychology, 33(10), 1232–1240. doi: 10.1037/a0035708.

“Digital Dementia: How Screens and Digital Devices Impact Memory.” (n.d.). Shoreline Media Marketing.

Evaluating Human-AI Collaboration: A Review and Methodological Framework. (n.d.). George Fragiadakis, Christos Diou, George Kousiouris, Mara Nikolaidou.

Evaluating Sources – Organizing Your Social Sciences Research Paper – Research Guides at University of Southern California. (Last updated Jul 3, 2025). USC Libraries, University of Southern California. Retrieved from https://libguides.usc.edu/writingguide.

- Cited within source: Barzilai, S., & Zohar, A. (2012). Epistemic Thinking in Action: Evaluating and Integrating Online Sources. Cognition and Instruction, 30, 39-85.

- Cited within source: Esparrago-Kalidas, A. J. (2021). The Effectiveness of CRAAP Test in Evaluating Credibility of Sources. International Journal of TESOL & Education, 1, 1-14.

- Cited within source: Kamela, M. (2024). Cut the CRAAP: Replacing Vertical Evaluation with Lateral Reading. New Directions for Teaching and Learning, 180, 49-58.

- Cited within source: Liu, G. (2021). Moving Up the Ladder of Source Assessment: Expanding the CRAAP Test with Critical Thinking and Metacognition. College & Research Libraries News, 82, 75.

- Cited within source: Muis, K. R., Denton, C. A., & Dubé, A. (2022). Identifying CRAAP on the Internet: A Source Evaluation Intervention. Advances in Social Sciences Research Journal, 9(July), 239-265.

- Cited within source: Ostenson, J. (2009, May). Skeptics on the Internet: Teaching Students to Read Critically. The English Journal, 98, 54-59.

- Cited within source: Stanford History Education Group. (2016). Evaluating Information: The Cornerstone of Civic Online Reasoning. Stanford, CA: Graduate School of Education.

- Cited within source: Walraven, A., Brand-Gruwel, S., & Boshuizen, H. P. A. (2009, January). How Students Evaluate Information and Sources When Searching the World Wide Web for Information. Computers and Education, 52, 234–246.

Fleisher, W. (n.d.). Intellectual Courage and Inquisitive Reasons. Philosophical Studies (Forthcoming). Retrieved from https://philpapers.org/archive/FLEICA.pdf.

- Cited within source: Hieronymi, P. (2008). Responsibility for believing. Synthese, 161(3), 357–373.

- Cited within source: Kornblith, H. (1983). Justified belief and epistemically responsible action. The Philosophical Review, 92(1), 33–48.

- Cited within source: Marshall, B. J., Armstrong, J. A., McGechie, D. B., & Glancy, R. J. (1985). Attempt to fulfill Koch’s postulates for pyloric campylobacter. The Medical Journal of Australia, 142, 436–439.

- Cited within source: Montmarquet, J. (1992). Epistemic Virtue and Doxastic Responsibility. American Philosophical Quarterly, 29(4), 331–341.

- Cited within source: Roberts, R. C., & Wood, W. J. (2007). Intellectual Virtues: An Essay in Regulative Epistemology. Clarendon Press.

- Cited within source: Schroeder, M. (2010). Value and the Right Kind of Reason. Oxford Studies in Metaethics, 5, 25–55.

Grissinger, M. (2019, June). Understanding Human Over-Reliance On Technology. P&T, 44(6), 320-321, 375. PMCID: PMC6534180. PMID: 31160864. Retrieved from https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6534180/.

- Cited within source: Goddard, K., Roudsari, A., & Wyatt, J. C. (2012). Automation bias: a systematic review of frequency, effect mediators, and mitigators. Journal of the American Medical Informatics Association, 19(1), 121–127. doi: 10.1136/amiajnl-2011-000089.

- Cited within source: Parasuraman, R., & Manzey, D. H. (2010). Complacency and bias in human use of automation: an attentional integration. Human Factors, 52(3), 381–410. doi: 10.1177/0018720810376055.

Han, B.-C. (n.d.). The scent of time: A philosophical essay on the art of lingering. Polity Press. (Original work translated by Daniel Steuer). Retrieved from https://ia601809.us.archive.org/31/items/november2020booksalex/Books%20November%202020/Han%2C%20Byung-Chul_Steuer%2C%20Daniel%20-%20The%20scent%20of%20time_%20a%20philosophical%20essay%20on%20the%20art%20of%20lingering-Polity%20Press%20%282017%29.pdf.

- Cited within source: Arendt, H. (1958). The Human Condition. Chicago and London: University of Chicago Press.

- Cited within source: Baudrillard, J. (2008). The Millennium or The Suspense of the Year 2000. In S. Redhead (Ed.), The Jean Baudrillard Reader (pp. 153–79). New York/Chichester: Columbia University Press.

- Cited within source: Bauman, Z. (1995). Life in Fragments: Essays in Postmodern Morality. Oxford: Blackwell.

- Cited within source: Heidegger, M. (1973). Being and Time. (J. Macquarrie and E. Robinson, Trans.). Oxford: Blackwell.

- Cited within source: Heidegger, M. (1971). On the Way to Language. (P. D. Hertz, Trans.). New York: Harper & Row.

- Cited within source: Heidegger, M. (1973). The Pathway. (T. F. O’Meara, Trans.; T. J. Sheehan, Rev.). Listening. Journal of Religion and Culture, 8, 32–9. Retrieved from http://religiousstudies.stanford.edu/WWW/Sheehan/pdf/heidegger_texts_online/1969%20%20THE%20PATHWAY%20(German%20-%20English).pdf.

- Cited within source: Koselleck, R. (2004). Futures Past: On the Semantics of Historical Time. (K. Tribe, Trans.). New York/Chichester: Columbia University Press.

- Cited within source: Marx, K. (1992). Economic and Philosophical Manuscripts (1844). In Early Writings. (R. Livingstone and G. Benton, Trans.). London: Penguin.

- Cited within source: Nietzsche, F. (1961). Thus Spoke Zarathustra. (R. Hollingdale, Trans.). London: Penguin.

- Cited within source: Proust, M. (1964). In Search of Lost Time. (C. K. S. Moncrieff, Trans.; T. Kilmartin, Rev.). London/New York: Routledge.

- Cited within source: Rosa, H. (2013). Social Acceleration: A New Theory of Modernity. (J. Trejo-Mathys, Trans.). New York/Chichester: Columbia University Press.

How Does Automation Bias Affect Decision-Making Processes? (n.d.). Sustainability Satellites.

Lee, K., Cascella, M., & Marwaha, R. (2025, January). Intellectual Disability. In StatPearls [Internet]. Treasure Island (FL): StatPearls Publishing. (Updated 2023 Jun 4). Bookshelf ID: NBK547654. PMID: 31613434. Retrieved from https://www.ncbi.nlm.nih.gov/books/NBK547654/.

Looking for Someone to Blame: Delegation, Cognitive Dissonance, and the Disposition Effect. (n.d.). Retrieved from https://files.fisher.osu.edu/department-finance/public/looking_for_someone_to_blame_delegation_cognitive_dissionance_and_the_disposition_effect.pdf.

Marková, I. (2016, August 5). Epistemic responsibility (Chapter 6). In The Dialogical Mind: Common Sense and Ethics (pp. 154-180). Cambridge University Press. doi: https://doi.org/10.1017/CBO9780511753602.011.

Moral Courage: Meaning and Nature. (n.d.). PHILO-notes.

No explicit title/author in excerpt. (2025). Informa UK Limited. Retrieved from https://www.tandfonline.com/doi/full/10.1080/2331186X.2024.2433814.

No explicit title/author in excerpt. (n.d.). Retrieved from https://ymerdigital.com/uploads/YMER2310E0.pdf.

- Cited within source: Bialik, M., & Fadel, C. (2019). Artificial Intelligence in Education: Promises and Implications for Teaching and Learning. Center for Curriculum Redesign.

- Cited within source: Holmes, W., Bialik, M., & Fadel, C. (2019). Artificial intelligence in education: Promises and implications for teaching and learning. Center for Curriculum Redesign.

Postman, N., & Baehr, J. (2016). Intellectual Virtues and Education: Essays in Applied Virtue Epistemology. Routledge.

Psychiatry.org – What is Technology Addiction? (n.d.). Psychiatry.org.

Reducing Cognitive Load (and not rigor). (n.d.). Teaching Commons.

Rudy-Hiller, F. (2022). The Epistemic Condition for Moral Responsibility. In E. N. Zalta (Ed.), The Stanford Encyclopedia of Philosophy (Fall 2022 Edition).

Sirlin, N., Epstein, Z., Arechar, A. A., & Rand, D. G. (2021, December 6). Digital literacy is associated with more discerning accuracy judgments but not sharing intentions. Harvard Kennedy School (HKS) Misinformation Review. doi: https://doi.org/10.37016/mr-2020-83.

Data availability: https://osf.io/kyx9z/?view_only=762302214a8248789bc6aeaa1c209029 and https://doi.org/10.7910/DVN/N0ITTI (data & code) and https://doi.org/10.7910/DVN/WG3P0Y (materials).

- Cited within source: Epstein, Z., Berinsky, A. J., Cole, R., Gully, A., Pennycook, G., & Rand, D. G. (2021). Developing an accuracy-prompt toolkit to reduce Covid-19 misinformation online. Harvard Kennedy School (HKS) Misinformation Review, 2(3). doi: https://doi.org/10.37016/mr-2020-71.

- Cited within source: Frederick, S. (2005). Cognitive reflection and decision making. Journal of Economic Perspectives, 19(4), 25–42. Retrieved from https://www.aeaweb.org/articles?id=10.1257/089533005775196732.

- Cited within source: Guess, A. M., & Munger, K. (2020). Digital literacy and online political behavior. OSF Preprints. doi: https://doi.org/10.31219/osf.io/3ncmk.

- Cited within source: Hargittai, E. (2005). Survey measures of web-oriented digital literacy. Social Science Computer Review, 23(3), 371–379. doi: https://doi.org/10.1177/0894439305275911.

- Cited within source: Pennycook, G., Epstein, Z., Mosleh, M., Arechar, A. A., Eckles, D., & Rand, D. G. (2021). Shifting attention to accuracy can reduce misinformation online. Nature, 592, 590–595. doi: https://doi.org/10.1038/s41586-021-03344-2.

- Cited within source: Pennycook, G., & Rand, D. G. (2019). Fighting misinformation on social media using crowdsourced judgments of news source quality. Proceedings of the National Academy of Sciences, 116, 2521–2526. doi: https://doi.org/10.1073/pnas.1806781116.

Small, G. W., Moody, T. D., Siddarth, P., & Bookheimer, S. Y. (2020, June). Brain health consequences of digital technology use. Dialogues in Clinical Neuroscience, 22(2), 179–187. doi: 10.31887/DCNS.2020.22.2/gsmall. PMCID: PMC7366948. PMID: 32699518. Retrieved from https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7366948/.

- Cited within source: Firth, J., Torous, J., Stubbs, B., et al. (2019). The “online brain”: how the Internet may be changing our cognition. World Psychiatry, 18(2), 119–129. doi: 10.1002/wps.20617.

- Cited within source: Madden, M. (2006, April). Internet penetration and impact. Pew Internet & American Life Project. Retrieved from http://www.pewinternet.org/pdfs/PIP_Internet_Impact.pdf.

- Cited within source: Small, G. W., Moody, T. D., Siddarth, P., & Bookheimer, S. Y. (2009). Your brain on Google: patterns of cerebral activation during Internet searching. American Journal of Geriatric Psychiatry, 17(2), 116–126. doi: 10.1097/JGP.0b013e3181953a02.

Trixa, J., & Kaspar, K. (2024, March 15). Information literacy in the digital age: information sources, evaluation strategies, and perceived teaching competences of pre-service teachers. Frontiers in Psychology, 15, 1336436. doi: 10.3389/fpsyg.2024.1336436.

Supplementary material: https://www.frontiersin.org/articles/10.3389/fpsyg.2024.1336436/full#supplementary-material.

- Cited within source: Association of College and Research Libraries. (2000). Information literacy competency standards for higher education. American Library Association. Retrieved from http://www.ala.org/acrl/standards/informationliteracycompetency.

- Cited within source: Australian and New Zealand Institute for Information Literacy and Council of Australian University Librarians. (2004). Australian and New Zealand information literacy framework: Principles, standards and practice (2nd ed). Adelaide: Australian and New Zealand Institute for Information Literacy.

- Cited within source: Bandura, A. (1997). Self-efficacy: the exercise of control. New York: W.H. Freeman and Company.

- Cited within source: Carretero, S., Vuorikari, R., & Punie, Y. (2017). DigComp 2.1: The digital competence framework for citizens with eight proficiency levels and examples of use. Publications Office of the European Union. Retrieved from https://data.europa.eu/doi/10.2760/38842.

- Cited within source: Clayton, K., Blair, S., Busam, J. A., Forstner, S., Glance, J., Green, G., et al. (2020). Real solutions for fake news? Measuring the effectiveness of general warnings and fact-check tags in reducing belief in false stories on social media. Political Behavior, 42(4), 1073–1095. doi: 10.1007/s11109-019-09533-0.

- Cited within source: European Commission, Directorate-General for Education, Youth, Sport and Culture. (2023). Digital education action plan 2021–2027: Improving the provision of digital skills in education and training. Publications Office of the European Union. Retrieved from https://data.europa.eu/doi/10.2766/149764.

- Cited within source: Ghomi, M., & Redecker, C. (2019). Digital competence of educators (DigCompEdu): development and evaluation of a self-assessment instrument for teachers’ digital competence. In Proceedings of the 11th international conference on computer supported education (pp. 541–548).

- Cited within source: Hatlevik, I. K. R., & Hatlevik, O. E. (2018). Examining the relationship between teachers’ ICT self-efficacy for educational purposes, collegial collaboration, lack of facilitation and the use of ICT in teaching practice. Frontiers in Psychology, 9, 935. doi: 10.3389/fpsyg.2018.00935.

- Cited within source: Institut für Demoskopie Allensbach. (2020). Die Vermittlung von Nachrichtenkompetenz in der Schule. Ergebnisse einer repräsentativen Befragung von Lehrkräften im Februar/März 2020. Retrieved from https://www.bdzv.de/fileadmin/content/6_Service/6-1_Presse/6-1-2_Pressemitteilungen/2020/Anhaenge/Bericht_Lehrkra__ftebefragung_Nachrichtenkompetenz_neutral.pdf.

- Cited within source: Ireton, C., & Posetti, J. (2018). Journalism, “fake news” and disinformation: a handbook for journalism education and training. Retrieved from http://unesdoc.unesco.org/images/0026/002655/265552e.pdf.

- Cited within source: Jones-Jang, S. M., Mortensen, T., & Liu, J. (2019). Does media literacy help identification of fake news? Information literacy helps, but other literacies don’t. American Behavioral Scientist, 65(3), 371–388. doi: 10.1177/0002764219869406.

- Cited within source: Kaspar, K., & Müller-Jensen, M. (2021). Information seeking behavior on Facebook: the role of censorship endorsement and personality. Current Psychology, 40(8), 3848–3859. doi: 10.1007/s12144-019-00316-8.

- Cited within source: Klassen, R. M., & Tze, V. M. C. (2014). Teachers’ self-efficacy, personality, and teaching effectiveness: a meta-analysis. Educational Research Review, 12, 59–76. doi: 10.1016/j.edurev.2014.06.001.

- Cited within source: Knobloch-Westerwick, S., Mothes, C., Johnson, B. K., Westerwick, A., & Donsbach, W. (2015). Political online information searching in Germany and the United States: confirmation bias, source credibility, and attitude impacts. Journal of Communication, 65(3), 489–511. doi: 10.1111/jcom.12154.

- Cited within source: König, J., Doll, J., Buchholtz, N., Förster, S., Kaspar, K., Rühl, A.-M., et al. (2018). Pädagogisches Wissen versus fachdidaktisches Wissen? Zeitschrift für Erziehungswissenschaft, 21(S1), 1–38. doi: 10.1007/s11618-017-0765-z.

- Cited within source: Kovalik, C., Jensen, M. L., Schloman, B., & Tipton, M. (2011). Information literacy, collaboration, and teacher education. Communications in Information Literacy, 4(2), 145–169. doi: 10.15760/comminfolit.2011.4.2.94.

- Cited within source: Kramer, C., König, J., Strauß, S., & Kaspar, K. (2020). Classroom videos or transcripts? A quasi-experimental study to assess the effects of media-based learning on pre-service teachers’ situation-specific skills of classroom management. International Journal of Educational Research, 103, 101624. doi: 10.1016/j.ijer.2020.101624.

- Cited within source: Matsa, K. E., & Shearer, E. (2018). News use across social media platforms 2018. Pew Research Center. Retrieved from https://www.pewresearch.org/journalism/2018/09/10/news-use-across-social-media-platforms-2018/.

- Cited within source: McNeill, L. S. (2018). “My friend posted it and that’s good enough for me!”: source perception in online information sharing. Journal of American Folklore, 131(522), 493–499. doi: 10.5406/jamerfolk.131.522.0493.

- Cited within source: Medienpädagogischer Forschungsverbund Südwest. (2019). JIM-Studie 2019 – Jugend, Information, Medien. Retrieved from https://www.mpfs.de/fileadmin/files/Studien/JIM/2019/JIM_2019.pdf.

- Cited within source: Mele, N., Lazer, D., Baum, M., Grinberg, N., Friedland, L., Joseph, K., et al. (2017). Combating fake news: An agenda for research and action. Retrieved from https://shorensteincenter.org/combating-fake-news-agenda-for-research/.

- Cited within source: Metzger, M. J., Flanagin, A. J., & Medders, R. B. (2010). Social and heuristic approaches to credibility evaluation online. Journal of Communication, 60(4), 413–439. doi: 10.1111/j.1460-2466.2010.01488.x.

- Cited within source: Newman, N., Fletcher, R., Kalogeropoulos, A., Levy, D. A. L., & Nielsen, R. K. (2018). Reuters institute digital news report 2018. Retrieved from https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3245355.

- Cited within source: Nielsen, R. K., Newman, N., Fletcher, R., & Kalogeropoulos, A. (2019). Reuters institute digital news report 2019. Retrieved from https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3414941.

- Cited within source: Pennycook, G., & Rand, D. G. (2019). Fighting misinformation on social media using crowdsourced judgments of news source quality. Proceedings of the National Academy of Sciences, 116(7), 2521–2526. doi: 10.1073/pnas.1806781116.

- Cited within source: Shearer, E., & Mitchell, A. (2021). News use across social media platforms in 2020. Pew Research Center’s Journalism Project. Retrieved from https://www.pewresearch.org/journalism/2021/01/12/news-use-across-social-media-platforms-in-2020/.

- Cited within source: Siddiq, F., Gochyyev, P., & Wilson, M. (2017). Learning in digital networks – ICT literacy: a novel assessment of students’ 21st century skills. Computers & Education, 109, 11–37. doi: 10.1016/j.compedu.2017.01.014.

- Cited within source: Tivian. (2020). Unipark [software]. Retrieved from https://www.unipark.com/.

- Cited within source: United Nations. (2020). UN tackles ‘infodemic’ of misinformation and cybercrime in COVID-19 crisis. Retrieved from https://www.un.org/en/un-coronavirus-communications-team/un-tackling-%E2%80%98infodemic%E2%80%99-misinformation-and-cybercrime-covid-19.

- Cited within source: Wu, D., Zhou, C., Li, Y., & Chen, M. (2022). Factors associated with teachers’ competence to develop students’ information literacy: a multilevel approach. Computers & Education, 176, 104360. doi: 10.1016/j.compedu.2021.104360.

- Cited within source: Zimmermann, M., Engel, O., & Mayweg-Paus, E. (2022). Pre-service teachers’ search strategies when sourcing educational information on the internet. Frontiers in Education, 7, 976346. doi: 10.3389/feduc.2022.976346.

Johnson, D. V. (2017, September 4). The Complacent Intellectual Class. The Baffler.

Cherry, K. (n.d.). What Is Conformity? Definition, Types, Psychology Research. Verywell Mind.

What Is Productive Struggle? [+ Strategies for Teachers]. (n.d.). USD Professional and Continuing Education.

Various Authors. (n.d.). The Preventing Alzheimer’s with Cognitive Training (PACT) Randomized Clinical Trial. Contemporary Clinical Trials, 123, 106978. doi: 10.1016/j.cct.2022.106978. PMCID: PMC10312210. PMID: 36341846. Retrieved from https://www.ncbi.nlm.nih.gov/pmc/articles/PMC10312210/.

- Cited within source: Cantarero-Prieto, D., Leon, P. L., Blazquez-Fernandez, C., Juan, P. S., & Cobo, C. S. (2020). The economic cost of dementia: A systematic review. Dementia, 19(8), 2637–2657. doi: 10.1177/1471301220950346.

- Cited within source: Edwards, J. D., Xu, H., Clark, D. O., Guey, L. T., Ross, L. A., & Unverzagt, F. W. (2017). Speed of processing training results in lower risk of dementia. Alzheimer’s & Dementia (New York, N.Y.), 3(4), 603–611. doi: 10.1016/j.trci.2017.09.006.

- Cited within source: Julbe-Delgado, D., O’Brien, J. L., Abdulkarim, R., Hudak, E. M., Maeda, H., & Edwards, J. D. (2021). Quantifying recruitment source and participant communication preferences for Alzheimer’s disease prevention research. The Journal of Prevention of Alzheimer’s Disease, 8(3), 299–305. doi: 10.14283/jpad.2021.24.

- Cited within source: National Centralized Repository for Alzheimer’s Disease and Related Dementias (NCRAD). (n.d.). Retrieved from https://ncrad.iu.edu/history_and_mission.html.

- Cited within source: National Institute on Aging. (2018). Together we make the difference: National strategy for recruitment and participation in Alzheimer’s and related dementias. Retrieved from https://www.nia.nih.gov/research/recruitment-strategy.

- Cited within source: National Institute on Aging. (2019). Alzheimer’s disease and related Dementias: Clinical studies recruitment planning guide. Retrieved from https://www.nia.nih.gov/sites/default/files/2019-05/ADEAR-recruitment-guide-508.pdf.

Baudrillard, J. (1981). Simulacra and simulation. Editions Galilee.

Disclosure Statement

This post was produced according to the approach outlined in The Art of Transparent AI Collaboration Workflow (click to review).